What is Google’s PaLM Algorithm?

PaLM stands for Pathways Language Model. It is Google’s new algorithm, developed to advance Google’s Pathways AI architecture. The main purpose of Pathways is to provide a single structure that can take on millions of different tasks at the same time, ranging from breaking down intricate information to reasoning.

Recently, Google announced a major development in its AI system: a step toward ensuring that it can handle a wide range of complex learning and reasoning tasks. The intent of PaLM is to outperform existing AI systems, and even human beings, in language and reasoning.

However, technology is never 100% perfect, so some margin of error should be expected. According to researchers, large-scale language models have inherent limitations, and they have warned that these limitations can lead to unethical outcomes.

Why is Google PaLM Interesting?

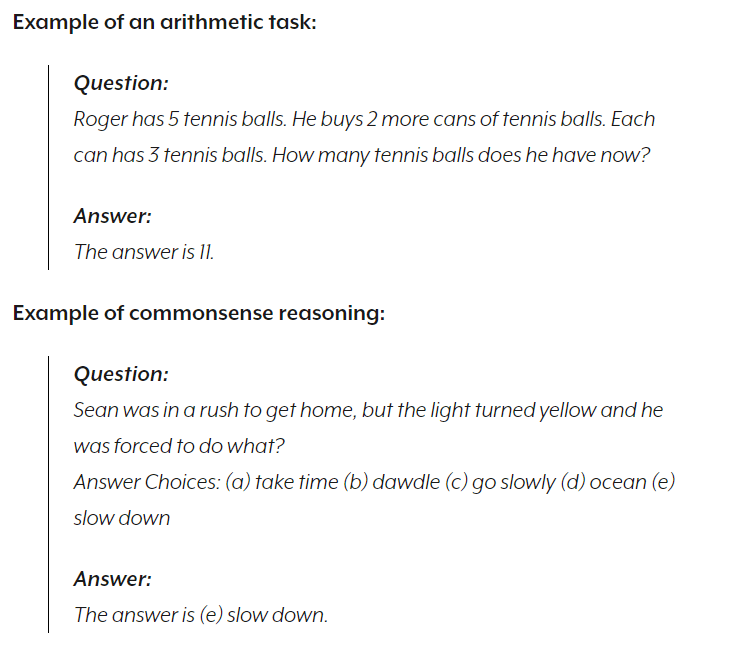

PaLM is interesting because it incorporates few-shot learning, which means making predictions based on only a small number of examples. To better understand how scale affects few-shot learning, researchers trained a 540-billion-parameter, densely activated Transformer language model.
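In practice, few-shot learning with a large language model usually means placing a handful of solved examples directly in the prompt and letting the model complete a new one, without changing the model’s weights. The sketch below shows only how such a prompt might be assembled. The `Example` class, the `build_few_shot_prompt` function, and the "Q:"/"A:" layout are hypothetical choices for illustration, not an official PaLM API or prompt format.

```python
from dataclasses import dataclass


@dataclass
class Example:
    """One solved demonstration shown to the model inside the prompt (hypothetical)."""
    question: str
    answer: str


def build_few_shot_prompt(examples: list[Example], query: str) -> str:
    """Assemble a few-shot prompt: k worked examples followed by a new question.

    The "Q:"/"A:" layout is an assumed convention for illustration only;
    it is not a documented PaLM prompt format.
    """
    # Each demonstration pairs a question with its known answer.
    blocks = [f"Q: {ex.question}\nA: {ex.answer}" for ex in examples]
    # The final block leaves the answer blank so the model is asked to complete it.
    blocks.append(f"Q: {query}\nA:")
    # Blank lines separate the demonstrations from each other and from the query.
    return "\n\n".join(blocks)


if __name__ == "__main__":
    demos = [
        Example("What is 12 + 7?", "19"),
        Example("What is 30 - 4?", "26"),
    ]
    print(build_few_shot_prompt(demos, "What is 9 + 15?"))
```

Here the "few shots" are the two demonstrations. The resulting text would be sent to the model as-is, and the model’s continuation after the final "A:" is taken as its prediction.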

Purpose of the PaLM AI Strategy

Let’s take this back a bit. In October 2021, Google published its desired outcomes for Pathways, the architecture behind PaLM. This served as a turning point in its continuous research into developing and improving its AI systems. Google intended to move away from traditional AI systems that were trained to perform only specific tasks.

This is where PaLM comes in. Rather than having thousands of separate algorithms each perform their own tasks, the strategy was to have one model learn how to solve many problems at once. This was meant to make processing information and tasks faster and more efficient.

For example, a model trained on one task, such as learning how aerial pictures can predict the elevation of a landscape, could reuse that knowledge for another task, such as predicting how floodwaters will flow through that specific terrain. The purpose here is to bridge the gap between machine learning and human learning.

What Researchers Have Found

PaLM outperformed previous state-of-the-art AI models on BIG-bench, another of Google’s collaborative projects. This benchmark includes well over 150 tasks covering question answering, language translation, and reasoning. As noted, technology is never 100% perfect, and there were multiple areas where PaLM fell short.

Notably, human performance exceeded PaLM’s by 35%, particularly on tests involving mathematics. PaLM also translated from English into other languages better than it translated from other languages into English. Researchers said this could be addressed by placing more emphasis on multilingual training data.

Reasoning Ability

Its performance on arithmetic and common-sense reasoning problems was particularly noteworthy. An arithmetic task might look like this: